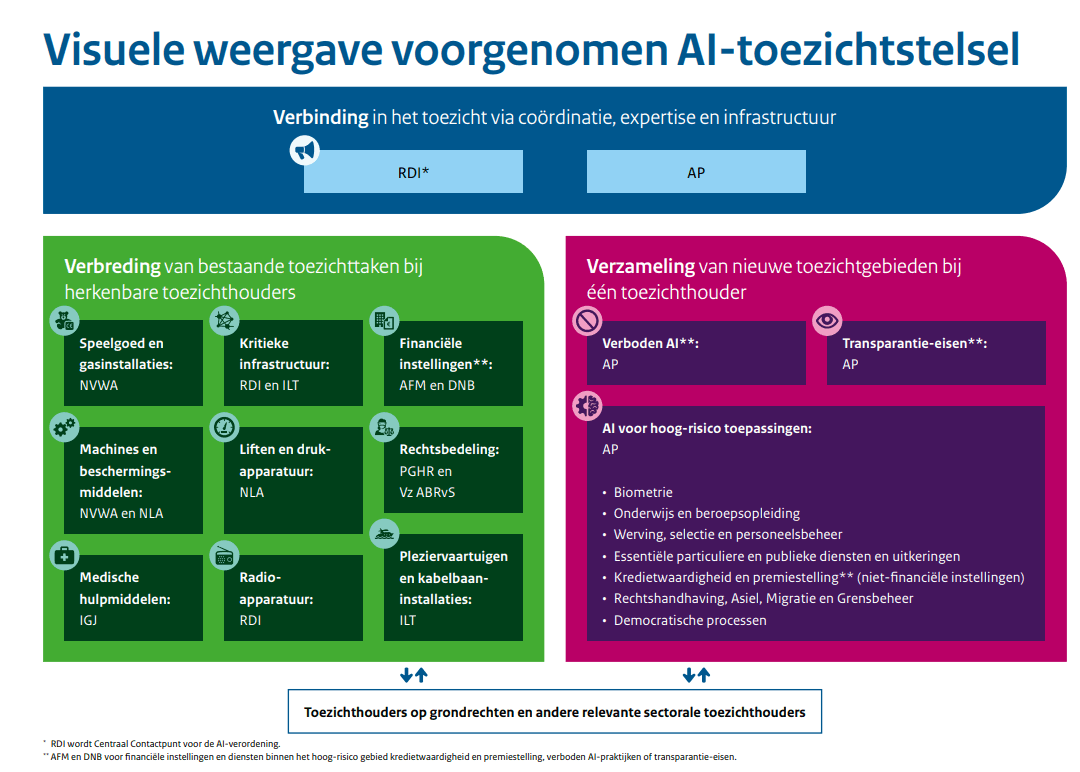

On 20 April 2026, the State Secretary for Digital Economy and Sovereignty published the draft Artificial Intelligence Regulation Implementation Act for public consultation. With this bill, the Netherlands gives effect to the AI Regulation at national level. The proposal designates which supervisory authorities will be responsible for enforcing the AI Regulation and sets out how supervision will be carried out. In total, ten supervisory authorities will be appointed.

The government has opted for a hybrid model in which existing supervisory authorities oversee AI within their own domains. For example, the Autoriteit Financiële Markten and De Nederlandsche Bank will remain responsible for financial institutions, while the Nederlandse Voedsel- en Warenautoriteit and the Nederlandse Arbeidsinspectie will supervise product safety. This way, organisations will, as far as possible, deal with supervisory authorities they already know.

Under Article 2.2 of the draft bill, these ten organisations are designated as market surveillance authorities. In addition, the Autoriteit Persoonsgegevens and the Rijksinspectie Digitale Infrastructuur will be given a coordinating role within the supervisory framework (see Article 5.4 of the draft bill).

The draft legislation also includes the following visual overview of the distribution of responsibilities among the supervisory authorities:

The Autoriteit Persoonsgegevens is designated as the market surveillance authority for three broad supervisory areas (see Article 2.2 of the draft bill):

- prohibited AI practices, such as manipulative AI and social scoring (Article 5 of the AI Regulation);
- transparency obligations, including the requirement to make chatbots and deepfakes recognisable as such (Article 50 of the AI Regulation);
- a large share of high-risk AI applications, such as AI used in recruitment and selection, education, benefits administration, law enforcement, and democratic processes (Annex III of the AI Regulation).

The Rijksinspectie Digitale Infrastructuur will act as the central contact point for the AI Regulation at both national and European level (see Article 2.3 of the draft bill).

For organisations developing or using AI, this legislative proposal provides greater clarity on which supervisory authority is competent. At the same time, the obligations become more concrete in practice. For high-risk AI systems, these requirements include risk management, data quality, technical documentation, and human oversight (Articles 9–15 of the AI Regulation).

With the designation of supervisory authorities, the Netherlands will also have bodies empowered to impose fines for breaches of the AI Regulation (see Article 3.7 of the draft bill).

Also relevant in practice: to support innovation, the AP (Autoriteit Persoonsgegevens) and the RDI (Rijksinspectie Digitale Infrastructuur) will jointly launch an AI regulatory sandbox later this year, in which organisations can test their AI systems and receive guidance (see Article 4.1 of the draft bill).

After the consultation phase has ended and responses have been processed, the bill will still need to pass both the House of Representatives and the Senate.

You can read the draft bill here.

Do you have questions? Please contact Menno de Wijs, attorney specialising in IT, Privacy & Cybersecurity law.

Would you like a monthly overview of updates and blog posts in your inbox? Subscribe to our newsletter.